HonestyMeter - A Free Open Source Framework for Bias and Manipulation Detection in Media Content

"By embracing HonestyMeter, you can join the vanguard of a movement that champions media objectivity and transparency. The more people who adopt this tool, the more we can create a well-informed society where the truth prevails over bias and misinformation." Read the full article in MTS.

Introduction:

Understanding HonestyMeter Through a Joke

The simplest way to illustrate the problem HonestyMeter addresses is with a joke: When the Pope arrives in Paris, a reporter asks him for his opinion on the city's famous bordellos. Surprised by the question, the Pope responds, "Are there bordellos in Paris?" The next day, the newspaper headlines read: "The Pope's First Question Upon Arrival in Paris: Are There Bordellos in Paris?" Although the facts presented are 100% true, the way they are reported is 100% misleading. Even if the article provides full context, most readers only read the headline and will never learn the details.

Truth Distortion

This anecdote illustrates the type of misleading presentation of facts that HonestyMeter is designed to address: true statements framed in a context that can completely distort their meaning. This distortion is often achieved through sophisticated manipulation techniques such as sensationalism, framing, and selective reporting, among many others, which can be applied either intentionally or unknowingly. These tactics can lead audiences to form distorted perceptions of reality, hindering their ability to make well-informed decisions. HonestyMeter aims to detect and clearly expose these tactics, helping journalists create more objective content and empowering audiences to make better-informed decisions.

Why Manipulative Reporting is More Dangerous Than Fake News

It's important to emphasize that manipulative reporting is a far more dangerous phenomenon than fake news. False facts can usually be detected easily, and authoritative sources fact-check thoroughly before publishing, because publishing false facts leads to immediate accountability. Consuming news from credible sources can therefore protect people almost entirely from fake news. However, when an authoritative source publishes content in which all the facts are real but are presented using sophisticated, hidden manipulation techniques, the perception of those facts can be dramatically distorted. As the joke above demonstrates, this kind of distortion can lead the audience to understand the exact opposite of the truth, making it effectively equivalent to fake news. Meanwhile, the source of the distortion typically faces zero accountability!

Introducing HonestyMeter: A Tool for Enhancing Media Objectivity and Transparency

To address this issue, we have developed the HonestyMeter framework, a free, AI-powered tool designed to assess objectivity, bias, and manipulation in media content. Using neural networks and advanced language models, HonestyMeter analyzes various media elements to identify potential manipulative tactics. It generates a comprehensive objectivity report that includes an objectivity score, a list of detected manipulations, and recommendations for reducing bias in the text. Wide adoption of HonestyMeter could enhance media transparency and objectivity worldwide, empowering authors to craft more objective content and enabling audiences to make better-informed decisions.

What Sets HonestyMeter Apart in Media Analysis?

Specialized Focus on Manipulations in Factual Information Presentation

Unlike basic fact-checking and bias/sentiment analysis tools, HonestyMeter focuses on sophisticated media manipulations. It detects how factual information is presented in misleading contexts, including the use of omission, framing, misleading headlines, and other similar techniques, which can lead to significant distortions of reality.

Free and Open Source

It offers free access, and its source code is publicly available, promoting transparency, wider accessibility, and community-driven improvements.

Self-Improving System

HonestyMeter harnesses both AI and user feedback, continually refining its capability to identify and analyze media manipulations.

These features establish HonestyMeter as a unique entity in media analysis, addressing complexities beyond the scope of typical media analysis tools.

Features:

Our initial release focused on a single feature: users could paste text and receive a bias report. Below are the features we have added over the past few months:

News Integrity Feed (New Release): Offers analysis of the latest news from leading sources. Users can search by keyword or filter by category and country.

Personal News Integrity Feed for Popular People (New Release): Analyzes the latest news about famous people. Users can search by name.

Ratings (New Release): Features ratings for the most praised and criticized people, located on the "People" page, and ratings for the most objective sources, available on the homepage.

Custom Content Analysis (New Release - now with Link Support): Users can submit links or text to receive a comprehensive bias report. This feature enables analysis of content not featured on our website and allows authors to reduce bias in their original content.

Honesty Badge (New Release): Users who share our vision of transparent, unbiased media can display our badge alongside any content they post on platforms or social networks they manage or use. This can enhance trust in and engagement with the content. Each share raises awareness of media transparency, contributing to a fairer world.

There are three types of badges:

General Badge - Demonstrates support for transparent, unbiased media. Can be used with any content, anywhere.

Fair Content Badge - For authors or publishers whose content has achieved a high objectivity score and who wish to highlight its objectivity.

Medium and High Bias Badges - For publishers who wish to openly indicate the bias level in their content, thereby demonstrating extreme transparency. These badges are used in conjunction with the Fair Content Badge.

Auto-Optimization Based on User Feedback (New Release): This feature turns HonestyMeter into a self-optimizing system that combines AI bias 'experts' with user feedback. Users can click on any section of the bias report and submit feedback, which is then reviewed by the AI. If the feedback is accepted, the report is updated accordingly, and the data is used to train and improve the model, enabling continuous improvement in the accuracy of the reports.
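The feedback cycle described above can be pictured as a small review-then-update loop. The Python sketch below is a minimal illustration under stated assumptions, not HonestyMeter's actual implementation: `Feedback`, `BiasReport`, `apply_feedback`, and the `review` and `revise` hooks (stand-ins for the AI reviewer and the report-rewriting step) are all hypothetical names, and the training step is reduced to collecting accepted feedback in a list.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Feedback:
    """A user's comment on one section of a bias report (hypothetical type)."""
    section: str  # name of the report section the user clicked on
    comment: str  # the user's suggested correction


@dataclass
class BiasReport:
    """A report split into named sections (hypothetical structure)."""
    sections: Dict[str, str]
    # Accepted feedback, kept as material for later model improvement.
    training_examples: List[Feedback] = field(default_factory=list)


def apply_feedback(
    report: BiasReport,
    feedback: Feedback,
    review: Callable[[str, Feedback], bool],
    revise: Callable[[str, Feedback], str],
) -> bool:
    """Run one feedback cycle and return True if the feedback was accepted.

    `review` stands in for the AI reviewer and `revise` for the step that
    rewrites the affected section; both are placeholders, not a real API.
    """
    current = report.sections.get(feedback.section)
    if current is None:
        return False  # feedback points at a section that does not exist
    if not review(current, feedback):
        return False  # the reviewer rejected the feedback; report unchanged
    # Accepted: update the section and keep the example for training.
    report.sections[feedback.section] = revise(current, feedback)
    report.training_examples.append(feedback)
    return True


if __name__ == "__main__":
    report = BiasReport(sections={"Framing": "The headline presents a quote as a scandal."})
    fb = Feedback(section="Framing", comment="The quote is shown out of context.")
    accepted = apply_feedback(
        report,
        fb,
        review=lambda text, f: len(f.comment) > 10,  # toy stand-in for the AI reviewer
        revise=lambda text, f: f"{text} User note: {f.comment}",
    )
    print(accepted)  # True
```

Passing the reviewer and the revision step in as functions keeps the loop itself independent of any particular model; how HonestyMeter actually decides whether to accept feedback, and how it retrains, is not specified here.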

Current State and Updates:

Over 18,000 reports generated.

Hundreds of new reports added daily.

Extensive coverage of the most popular people, e.g., over 500 reports on Elon Musk, Donald Trump, and Taylor Swift, among others.

Over 140 links from multiple websites in various languages, including listings and upvotes in leading AI tool indexes.

Surprisingly, HonestyMeter is used in multiple languages, despite being primarily English-focused.

The current version is an experimental demo. We're developing a more sophisticated version with higher accuracy and consistency. Nonetheless, even in its current form, HonestyMeter often provides insights that are difficult for humans to detect.

Technical Details

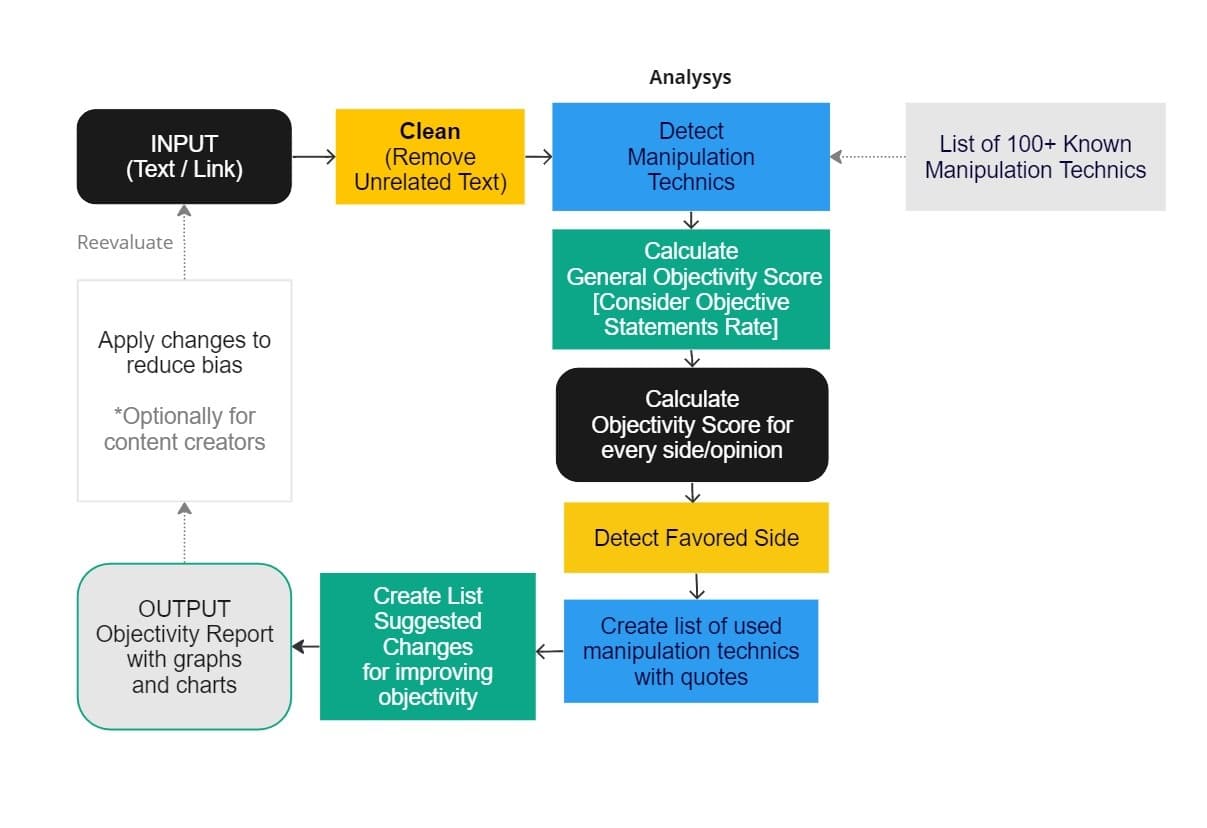

Evaluation Process:

The HonestyMeter framework uses a multi-step process to evaluate the objectivity and bias of media content:

Input: The user provides media content, or a link to it, which may include text, images, audio, or video. (Currently, only text is supported, but we plan to add more modalities in future versions.)

Analysis: The framework uses large language models to analyze the media content and identify any manipulative techniques that may be present. The analysis includes evaluating the tone, sentiment, and language used in the content.

Scoring: Based on the analysis, the framework provides an overall objectivity score for the media content on a scale of 0-100. Additionally, the framework scores the objectivity level for each side represented in the content.

Reporting: The framework generates a report summarizing the analysis, scores, and feedback provided for the media content.

Feedback: The framework gives the user feedback on the manipulative techniques identified and on the areas of the content that may be biased or lacking in objectivity, and suggests possible improvements.

Improvement: The user can take the feedback provided by the framework and use it to improve the objectivity of the content.
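To make the data flow of these steps concrete, here is a minimal Python sketch of how they might fit together for text-only input. It is an illustration under assumptions, not HonestyMeter's actual code: all type and function names are hypothetical, and the `analyze` callable stands in for the large-language-model analysis, which is assumed to return the overall score, the per-side scores, and the detected manipulations directly. The toy analyzer and its scores are made up.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Manipulation:
    technique: str   # e.g. "Misleading headline", "Selective reporting"
    excerpt: str     # the passage where the technique appears
    suggestion: str  # a possible, more objective alternative


@dataclass
class AnalysisResult:
    """What the (hypothetical) LLM analysis step is assumed to return."""
    overall_score: int             # 0-100, higher means more objective
    side_scores: Dict[str, int]    # each side represented in the content -> 0-100
    manipulations: List[Manipulation]


@dataclass
class ObjectivityReport:
    overall_score: int
    side_scores: Dict[str, int]
    manipulations: List[Manipulation]
    recommendations: List[str]


def check_score(score: int) -> int:
    """Reject scores outside the 0-100 scale instead of silently clamping them."""
    if not 0 <= score <= 100:
        raise ValueError(f"score {score} is outside the 0-100 scale")
    return score


def evaluate(text: str, analyze: Callable[[str], AnalysisResult]) -> ObjectivityReport:
    """Input -> Analysis -> Scoring -> Reporting/Feedback for text-only input."""
    if not text.strip():
        raise ValueError("empty input")  # Input: only text is handled here
    result = analyze(text)  # Analysis: stand-in for the language-model call
    return ObjectivityReport(
        overall_score=check_score(result.overall_score),  # Scoring
        side_scores={side: check_score(s) for side, s in result.side_scores.items()},
        manipulations=result.manipulations,  # Reporting
        recommendations=[m.suggestion for m in result.manipulations],  # Feedback
    )


def toy_analyze(text: str) -> AnalysisResult:
    """Toy analyzer with made-up scores; it only matches one fixed phrase."""
    found = []
    if "Are There Bordellos" in text:
        found.append(Manipulation(
            technique="Misleading headline",
            excerpt="The Pope's First Question Upon Arrival in Paris",
            suggestion="State that the question was a surprised reply to a reporter.",
        ))
    score = 40 if found else 90  # illustrative values only
    return AnalysisResult(overall_score=score, side_scores={"The Pope": score}, manipulations=found)


if __name__ == "__main__":
    headline = "The Pope's First Question Upon Arrival in Paris: Are There Bordellos in Paris?"
    report = evaluate(headline, toy_analyze)
    print(report.overall_score, report.recommendations)
```

Keeping the model call behind a plain callable lets the scoring and reporting logic be tested without any network access; the toy analyzer says nothing about how a real language model would detect manipulations.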

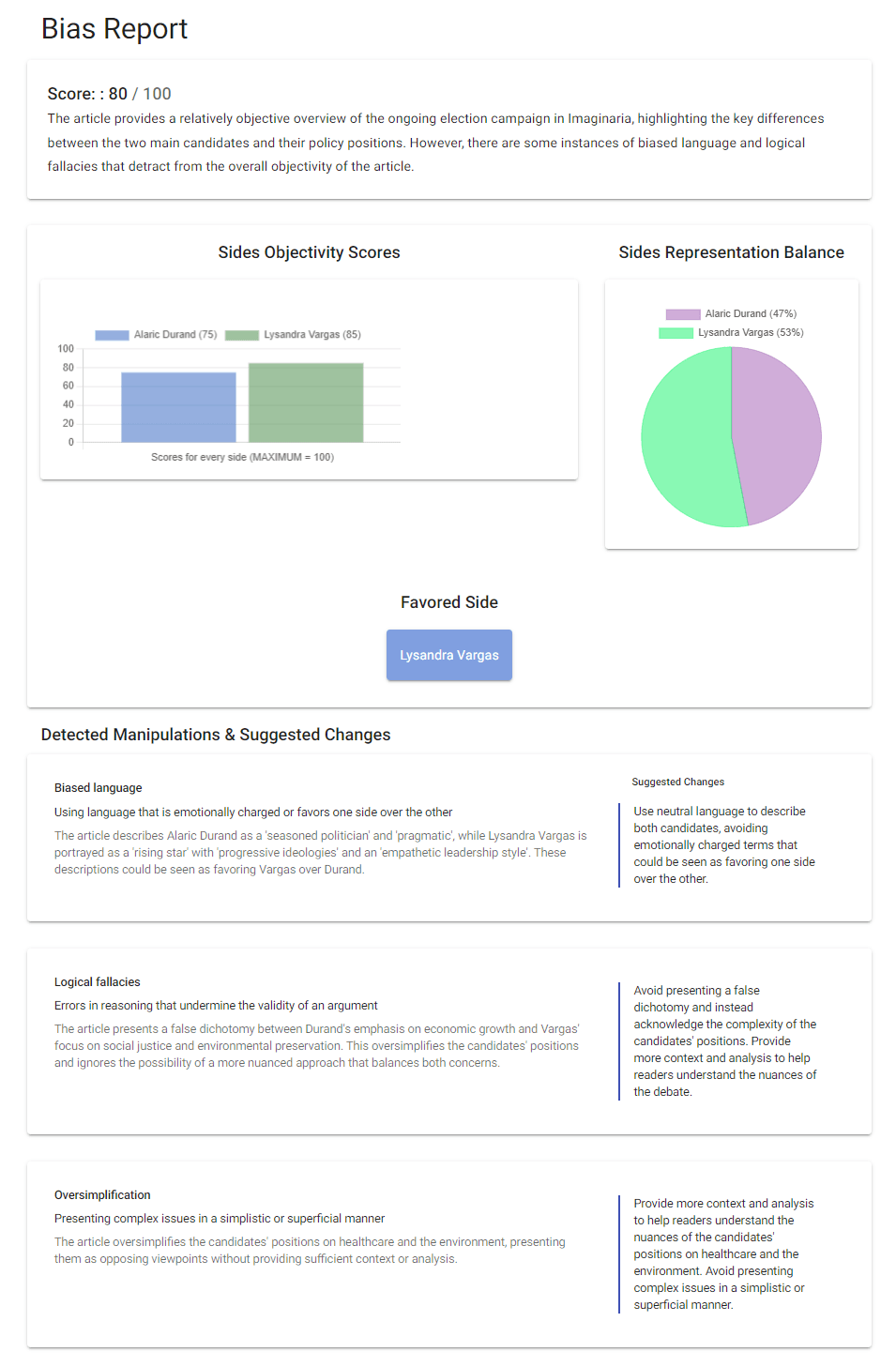

Example HonestyMeter Report Screenshot:

(GPT-4 Generated Article Explores Imaginary Debates Between Fictional Candidates in a Hypothetical Country)

Future Plans:

In our ideal future vision, we aspire to create a comprehensive media manipulation detection tool that supports the analysis of image, video, and audio content: evaluating combinations of text and images in articles, voice tonality in audio and video content, background images and video footage, as well as body language and facial expressions in video content. This represents the challenging goal of creating a process that considers all possible modalities and analyzes how they are integrated with each other in any piece of content, be it an article, book, podcast, or video.

Special thanks to:

1littlecoder, Yohei Nakajima, and Matt Wolfe, for the inspiring content that made us fall in love with AI-powered apps. That inspiration led us to create HonestyMeter, and we're grateful for their contribution!

Our heartfelt gratitude extends to the entire community of AI researchers whose groundbreaking work has been instrumental to our project. Without their dedication and creativity, HonestyMeter would have required an investment a thousand times larger and a team a hundred times bigger.

We give special recognition to OpenAI for their exceptional advancements in generative AI, which have been crucial in realizing our vision. Our personal thanks go to Sam Altman, Ilya Sutskever, Greg Brockman, Elon Musk, and all the other talented individuals who contributed to the development of this transformative technology. Their visionary leadership and commitment to innovation in AI have not only made our project achievable, but have also enabled thousands of other innovative projects, significantly advancing the frontiers of technological possibilities.

Important Considerations When Using the HonestyMeter Framework:

When using the tool for the first time, you may be shocked by the high levels of subjectivity even in the content of the most well-known and authoritative mass media sources. It is essential to acknowledge that no one can be entirely objective, and some degree of bias is inevitable. Furthermore, a low objectivity score does not necessarily indicate malicious intent on the part of the mass media or journalists. Many instances of biased content are created unknowingly, with the best of intentions.

Our goal is not to blame anyone, but to provide a valuable tool for content creators and consumers alike that can help improve objectivity in media content. By using the HonestyMeter framework thoughtfully and with an understanding of its limitations, we can take a step towards creating a more reliable and trustworthy source of information for all.

Conclusion:

The HonestyMeter framework has the potential to be a game-changer in addressing media bias and misinformation. Its widespread adoption could increase transparency and objectivity in mass media by helping journalists and content creators produce more objective content and by empowering users to make informed decisions with ease. HonestyMeter could become an essential tool for anyone seeking truthful and unbiased information.

Join Us in Shaping the Future of Media Truth

To date, HonestyMeter has been fully self-funded. We invest our own time and money in research, development, and maintenance. Although we are fully capable of progressing independently, we are open to partnering with those who share our vision and can offer a substantial contribution, whether by enhancing visibility, funding collaborations, or offering expertise.

If you share our vision of truthful media and are interested in making a contribution that has the potential for major advancement, please feel free to reach out to us at info@honestymeter.com.

Together, we can let the truth triumph.

Honest Disclosure:

This text was evaluated by the HonestyMeter and found to be highly biased towards promoting mass media transparency and the use of the HonestyMeter. 😊